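First, analyze the URL. Each page of the ranking has a URL of the form

`https://www.kugou.com/yy/rank/home/2-8888.html?from=rank`

The number before `-8888` is the page number, so the links to scrape can be built with a single line of code. For the remaining steps, see the code.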
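The one-line URL construction can be sketched as a small function. This is a sketch under stated assumptions: the name `build_rank_urls` is hypothetical, the page range 1 to 23 mirrors the scraper's `range(1, 24)`, and the `?from=rank` query string is assumed to be optional because the scraper omits it.

```python
# Hypothetical helper: builds the ranking page URLs from the observed pattern.
# Assumption: "?from=rank" is optional and pages are numbered 1..23.
BASE = "https://www.kugou.com/yy/rank/home/{}-8888.html"


def build_rank_urls(first_page=1, last_page=23):
    """Return the URLs for pages first_page..last_page (inclusive)."""
    return [BASE.format(page) for page in range(first_page, last_page + 1)]


urls = build_rank_urls()
print(len(urls))  # 23
print(urls[0])    # https://www.kugou.com/yy/rank/home/1-8888.html
```

Using an inclusive `last_page` argument avoids the off-by-one trap of `range(1, 24)`, where the upper bound 24 is never fetched.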

import requests

from bs4 import BeautifulSoup

import time

headers = {

'User-Agent':'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/66.0.3359.181 Safari/537.36'

}

def get_info(url):

wb_data = requests.get(url, headers = headers)

soup = BeautifulSoup(wb_data.text,'lxml')

ranks = soup.select('span.pc_temp_num')

titles = soup.select('#rankWrap > div.pc_temp_songlist > ul > li > a')

times = soup.select('#rankWrap > div.pc_temp_songlist > ul > li > span.pc_temp_tips_r > span')

for rank,title,time in zip(ranks,titles,times):

data = {

'rank':rank.get_text().strip(),

'singer':title.get_text().split('-')[0],

'song':title.get_text().split('-')[1],

'time':time.get_text().strip(),

'playUrl':title['href']

}

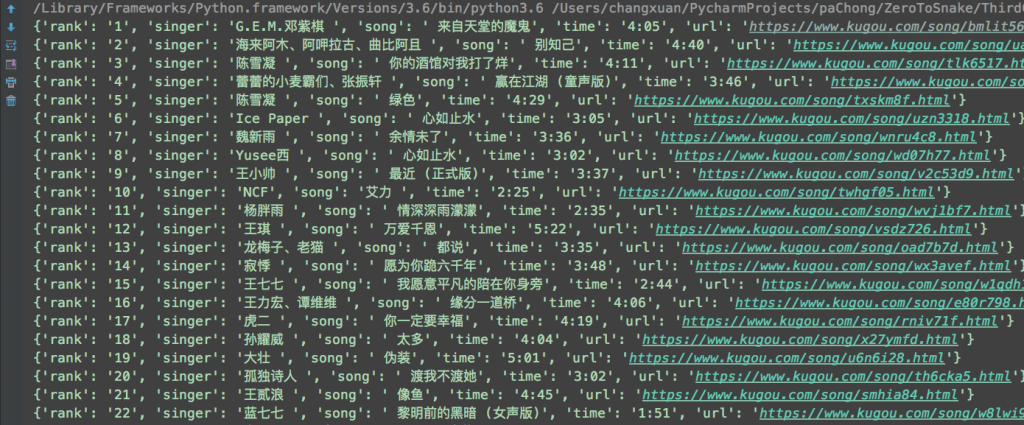

print(data)

if __name__ == '__main__':

urls = ['http://www.kugou.com//yy/rank/home/{}-8888.html'.format(str(i)) for i in range(1,24)]

for url in urls:

get_info(url)

time.sleep(1)
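Splitting the link text with `split('-')[0]` and `split('-')[1]` breaks on titles that contain more than one hyphen, and raises `IndexError` when there is none. A hedged alternative is to split only once on a spaced separator. The helper name `split_title` is hypothetical, and the `" - "` separator (with spaces) is an assumption about how the page formats "singer - song".

```python
def split_title(text, sep=" - "):
    """Split 'singer - song' link text on the FIRST separator only.

    Assumption: the page uses " - " (spaces included) between singer and song.
    If no separator is found, the whole text is treated as the song title.
    """
    singer, found, song = text.strip().partition(sep)
    if not found:
        return "", singer.strip()
    return singer.strip(), song.strip()


print(split_title("Artist A - Song-B (Live)"))  # ('Artist A', 'Song-B (Live)')
print(split_title("A-ha - Take On Me"))         # ('A-ha', 'Take On Me')
```

With a plain `split('-')`, the first example would yield `" Song"` as the song, and the second would cut the singer down to `"A"`.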
